This article shows how we can leverage entropy to detect LLM hallucinations efficiently.

The concept of entropy spans multiple fields of study. It first emerged in thermodynamics to measure how many different states are possible in a system, essentially quantifying its level of randomness. This foundational idea was later adapted by Claude Shannon for information theory, giving birth to what we now call "Shannon entropy."

Shannon Entropy

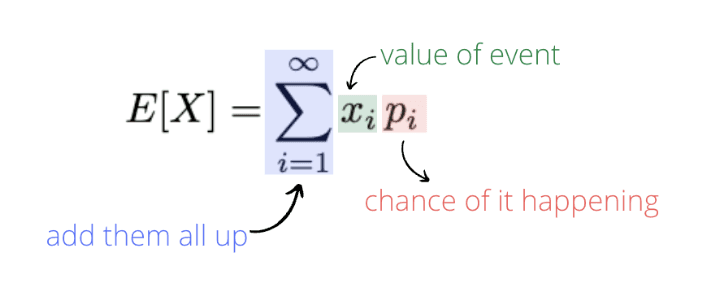

H(X) = -Σ P(xᵢ) log₂ P(xᵢ)The entropy H(X) measures uncertainty in a system X that has n different possible outcomes, with each outcome having its own probability P(xᵢ).

LLMs generate text by selecting tokens sequentially. For each selection, the model calculates probabilities for potential next tokens and typically chooses the most probable one (though this can be adjusted through steering settings). If you have access to these probability distributions the model generates, you can calculate the entropy at each token selection step.

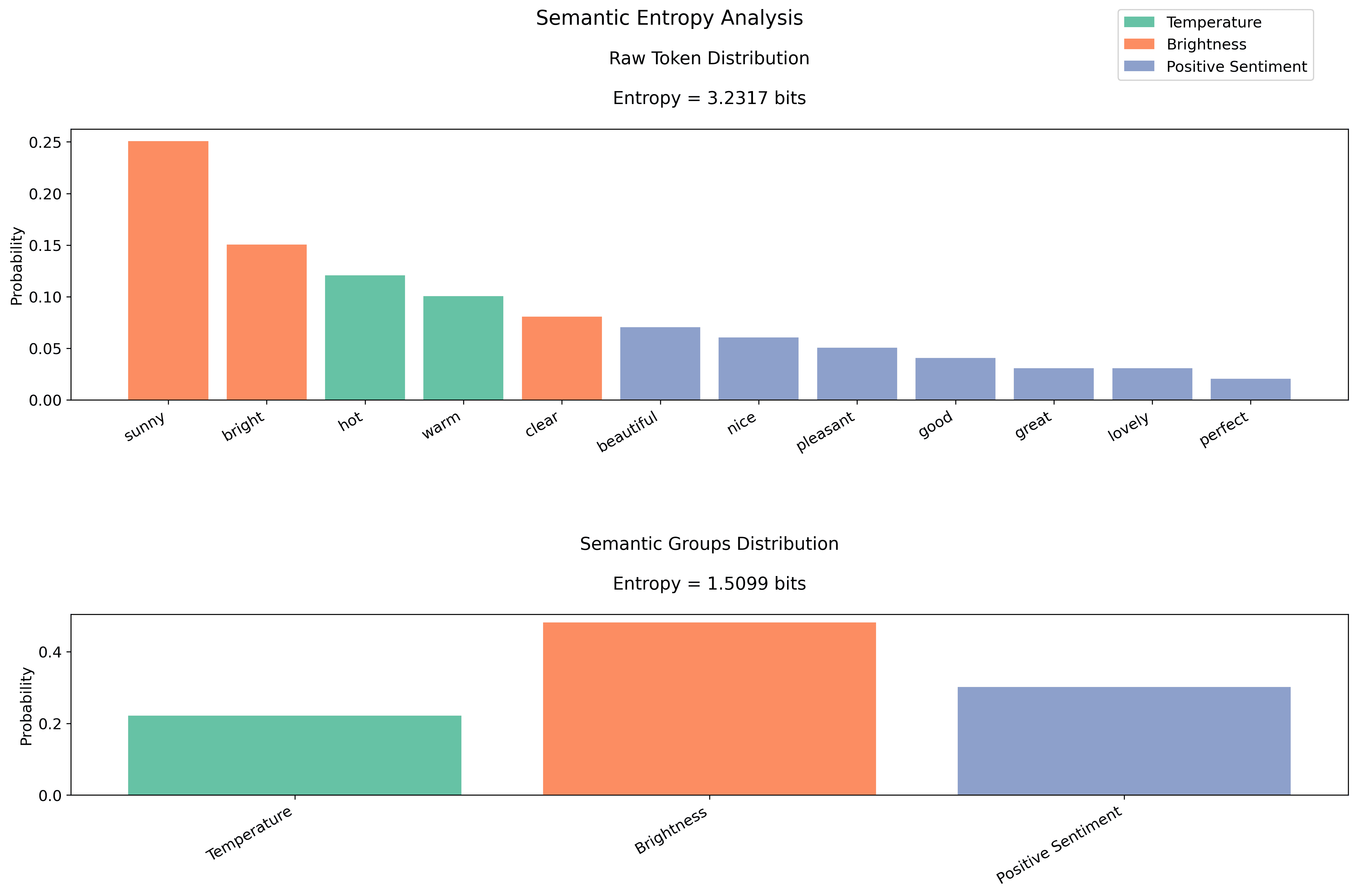

But looking at entropy calculations alone won't reveal if an LLM truly understands what it's saying. This limitation exists because human language offers multiple valid ways to express the same idea.

Simply calculating entropy from the raw probabilities is not enough, since there are many ways to say the same thing. But when we group answers by their actual meaning (clustering + embeddings), we get a clearer picture.

Semantic Entropy

Hₛ = -Σ P(gⱼ) log₂ P(gⱼ)

where gⱼ represents semantic groupsPractical interpretation:

- Entropy = 1 bit: model choosing between 2 equally likely options

- Entropy = 2 bits: choosing between 4 equally likely options

- Entropy = 3 bits: choosing between 8 equally likely options

- Our semantic entropy reduction (from 3.1 to 1.5 bits) shows we've reduced complexity from ~8 choices to ~3 effective choices

This brings structure to our evaluation algorithm. But we're still not completely satisfied — how can we measure the surprise factor?

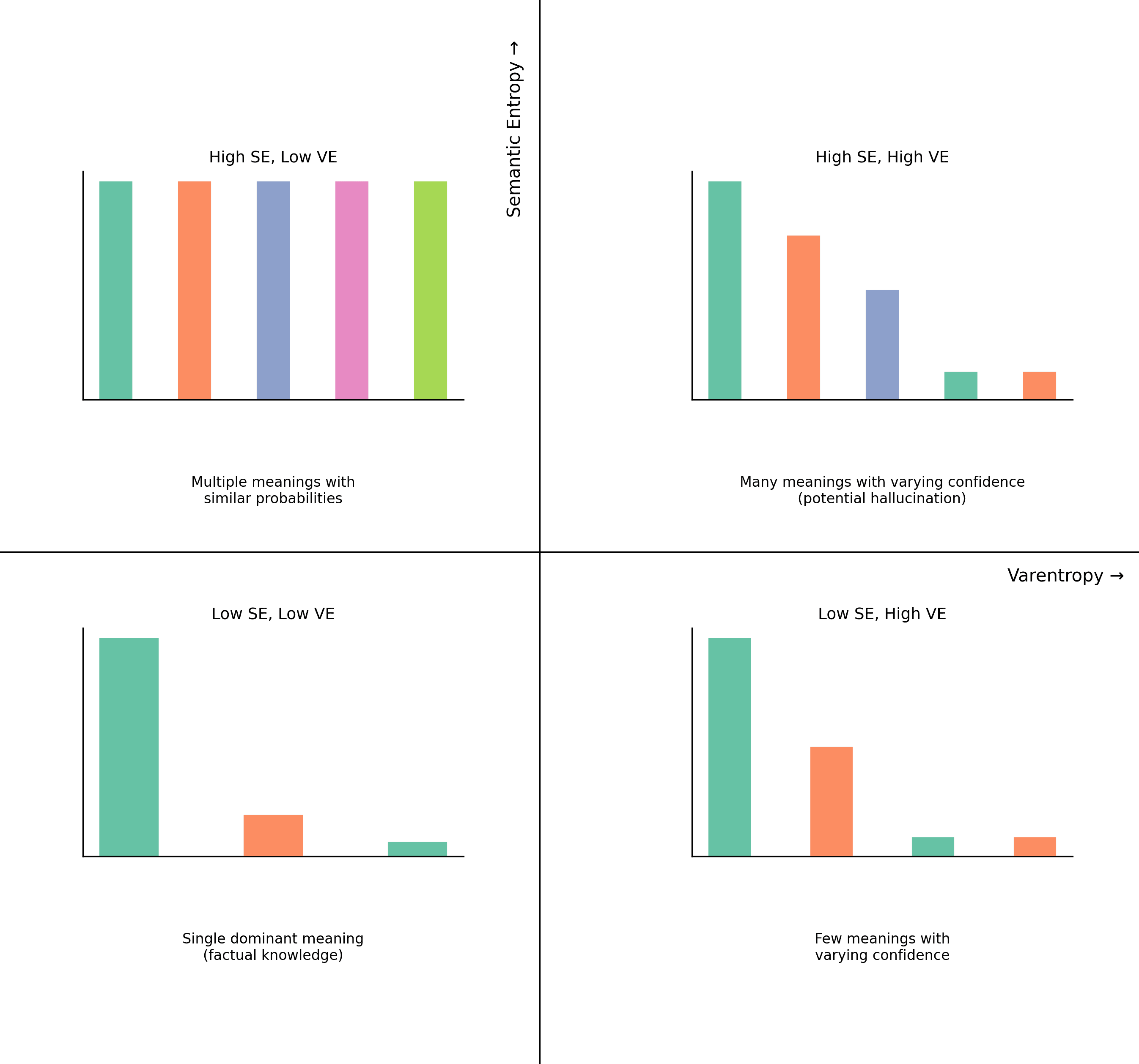

Varentropy

Semantic entropy helps us understand how many different ideas a model is considering, but it doesn't tell us about the model's relative confidence in those different options. This is where varentropy comes in — it measures how much the model's confidence varies across different token predictions.

V(X) = Σ P(xᵢ)(-log₂ P(xᵢ) - H(X))²

where:

P(xᵢ) = probability of token i

H(X) = entropy (average information content)

n = number of tokens being considered

Experiment

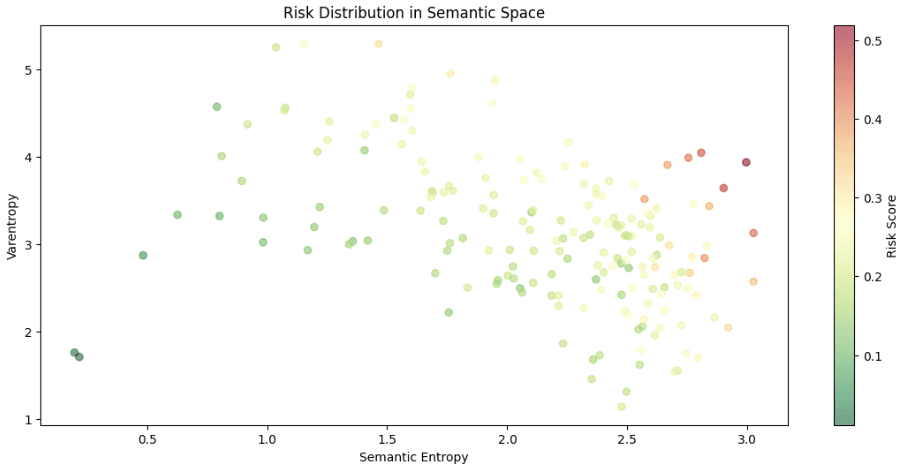

We did a comprehensive analysis to see how language models handle different types of content through the lens of semantic entropy and varentropy. We used the Qwen2.5 0.5B base model with TGI engine for inference.

Dataset:

- N=2k prompts, minimum 20 tokens per prompt

- Five categories: factual statements, hallucinations, subjective opinions, ambiguous statements, edge cases

Each prompt was carefully semi-manually crafted. The hypothesis: hallucinations would display distinctly different entropy signatures compared to factual or ambiguous statements.

Results

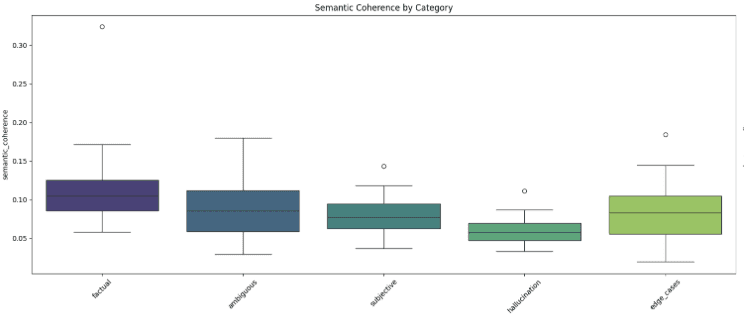

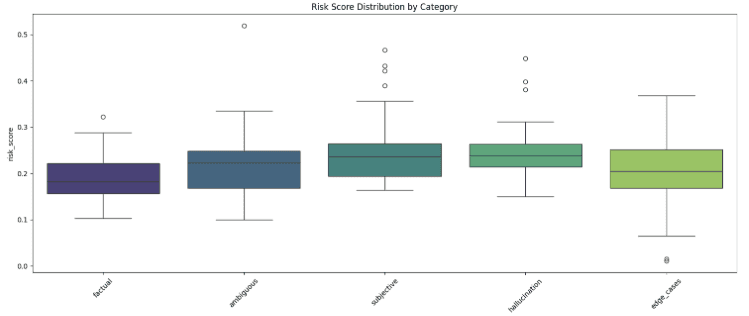

The scatter plot of Semantic Entropy vs. Varentropy reveals a clear pattern:

- Low semantic entropy (0.5–1.5) correlates with factual statements

- Higher semantic entropy (2.0–3.0) typically indicates hallucinations

- As semantic entropy increases, we see higher varentropy, suggesting increased model uncertainty

- Clear progression from low-risk (green) to high-risk (red) predictions

Key Insights

The combination of semantic entropy and varentropy proves to be a powerful tool for detecting hallucinations. When a model starts "making things up," both metrics increase significantly.

The clear separation in semantic space suggests we can reliably identify when a model is operating in factual versus hallucinatory modes.

Edge cases reveal the nuanced nature of AI reasoning — they often sit between factual and hallucinatory territories, much like human uncertainty in frontier scientific theories or philosophical questions.

Applications:

- Quality control for AI-generated content

- Real-time hallucination detection in AI systems

- Confidence scoring for AI outputs

- Better understanding of model uncertainty

Our reward models leverage these concepts enabling the reduction of uncertainty and hallucinations by 5–7x in production.

Can we teach LLMs to ask for human help when they reach their reasoning limits? Would AI self-reflection provide the same type of risk classification?